The Polish Supervisory Authority (UODO), Poland’s data regulator, said it is considering launching an inquiry into ChatGPT maker OpenAI. The statement comes after a citizen alleged the tool generated false information about him, raising questions about how OpenAI’s algorithm processes personal data.

The complainant alleges that ChatGPT used 2021 data, obtained in a way that did not comply with EU privacy laws, to generate an untrue output. The tool also did not disclose the source of that data, which the complainant says calls for a closer inspection of OpenAI’s data processing methods.

Polish Regulator to Pursue OpenAI Inquiry

Moreover, questions directed at OpenAI did not receive satisfactory answers. In response to the complaint, UODO’s Deputy President, Jakub Groszkowski, affirmed the complainant’s right to recourse and emphasized the duty of data privacy watchdogs.

“The development of new technologies must take place with respect to the rights of natural persons resulting from, among others, [General Data Protection Regulation]. The task of European personal data protection authorities is to protect European citizens against the negative effects of information processing technology.”

He said the complaint raised doubts about whether OpenAI complied with the GDPR’s core principle of “privacy by design.” In response, UODO will attempt to clarify OpenAI’s data policies, UODO’s president confirmed.

“The case concerns the violation of many provisions on the protection of personal data, which is why we will ask OpenAI for an answer to a number of questions to be able to thoroughly carry out administrative proceedings.”

The EU’s GDPR, which took effect in May 2018, includes safeguards on automated decision-making that apply to artificial intelligence (AI).

At the time, the regulation’s requirement that companies explain how they use personal information in AI systems was criticized. Early commentators suggested it was nearly impossible for companies to know whether they were fully compliant.

Moreover, the laws were believed to be a drag on innovation. At the time, 74% of respondents to a German survey of the digital industry said privacy laws were the main roadblock in developing new technologies.

New Legislation May Fix Poor Handling of Personal Data

Some fundamental issues with automated decision-making have carried over from earlier AI models.

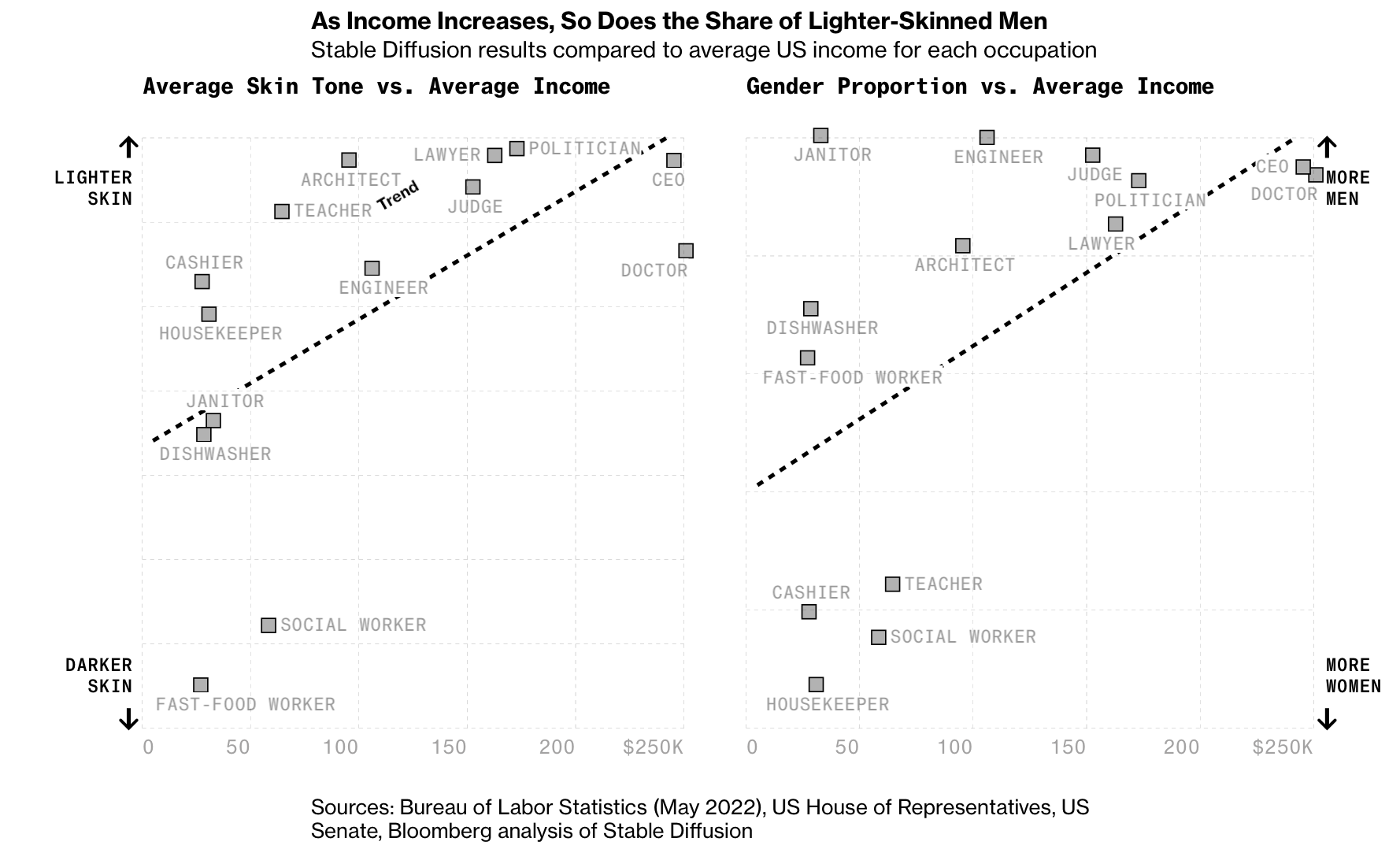

Early machine learning models, the building blocks of large language models, were criticized for their tendency to interpret personal data in ways that reinforced societal ills. For example, a model could perpetuate prejudices in recruitment by underweighting female candidates for computer programming jobs.

The recent COVID-19 pandemic prompted many governments to rush or altogether bypass due diligence in favor of speed. Many companies used the pandemic to push AI, automation, and surveillance tools in the name of the greater good.

A system created by exams regulator Ofqual attempted to algorithmically prevent the inflation of British school results. Instead, it discriminated against genuine high achievers and children from less-advantaged backgrounds.

Later this year, British Prime Minister Rishi Sunak will host a summit of AI executives and researchers to decide on the future of AI. The European Parliament could pass the EU’s Artificial Intelligence Act by the end of the year.
