Artificial intelligence may now be able to convert thoughts into text non-invasively. According to a recent report, researchers are leading the way toward this milestone.

Artificial intelligence (AI) is increasingly used to translate brain activity into a continuous stream of text. This could transform communication for people with severe neurological conditions. AI is particularly promising for interpreting brain activity recorded with neuroimaging techniques.

Tapping New Opportunities With AI

In the latest development, an AI-based semantic decoder translated brain activity into a continuous stream of text.

For the first time, this breakthrough would allow thoughts to be converted into text non-invasively. This could significantly aid people who struggle to communicate after a stroke or because of motor neuron disease.

Interpreting brain activity requires sophisticated data analysis techniques to extract meaningful information from complex and noisy data. AI algorithms can help automate and streamline this process, allowing researchers to make more accurate and reliable inferences about brain function.

Here, the decoder could accurately reconstruct speech while participants listened to or imagined a story. That is a massive leap compared with past language-decoding systems, which relied on surgical implants.

Renowned scientists have welcomed the development because it overcame a major obstacle. Dr. Alexander Huth, a University of Texas-based neuroscientist who led the research, said:

“For a non-invasive method, this is a real leap forward compared to what’s been done before, which is typically single words or short sentences.”

AI Overcoming Setbacks

Functional magnetic resonance imaging (fMRI) measures changes in blood flow to different areas of the brain, which can be used to infer neural activity. However, this process is relatively slow compared to the actual firing of neurons in the brain.

The time resolution of fMRI is typically on the order of seconds, meaning it cannot capture rapid changes in brain activity. This makes it challenging to analyze brain activity in response to natural speech because it gives a “mishmash of information” spread over a few seconds, according to the researchers.
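To make the timing problem concrete, here is a toy simulation, not the researchers' actual pipeline. It assumes a gamma-shaped blood-flow response peaking about 5 seconds after each word, a speaking rate of 3 words per second, and one scan every 2 seconds. All of these numbers and function names are illustrative assumptions.

```python
import numpy as np

SAMPLES_PER_S = 10   # simulation grid: 10 samples per second (assumption)
TR_S = 2             # assumed interval between scans, in seconds

def toy_hrf(duration_s=30, peak_s=5.0, shape=6.0):
    """Toy blood-flow response: a gamma-shaped bump peaking near peak_s."""
    t = np.arange(duration_s * SAMPLES_PER_S) / SAMPLES_PER_S
    scale = peak_s / (shape - 1.0)          # gamma curve peaks at (shape - 1) * scale
    h = t ** (shape - 1.0) * np.exp(-t / scale)
    return h / h.sum()                      # normalize so each word adds one unit

def simulate(n_words=12, words_per_s=3, total_s=40):
    """Return the word events, the blurred signal, and the sparse scans."""
    neural = np.zeros(total_s * SAMPLES_PER_S)
    # One brief burst of activity per word, starting 1 second in.
    offsets = np.rint(np.arange(n_words) * SAMPLES_PER_S / words_per_s)
    neural[SAMPLES_PER_S + offsets.astype(int)] = 1.0
    # Each burst is smeared over several seconds by the slow response.
    bold = np.convolve(neural, toy_hrf())[: neural.size]
    # The scanner only sees one value every TR_S seconds.
    scans = bold[:: TR_S * SAMPLES_PER_S]
    return neural, bold, scans

neural, bold, scans = simulate()
print(f"{int(neural.sum())} words spoken in about 4 s")
print(f"blurred signal peaks at {bold.argmax() / SAMPLES_PER_S:.1f} s")
print(f"scanner records {scans.size} values over {neural.size // SAMPLES_PER_S} s")
```

In this sketch the responses to all twelve words overlap into a single slow bump that peaks after the last word has already been spoken, so each scan mixes information from many words.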

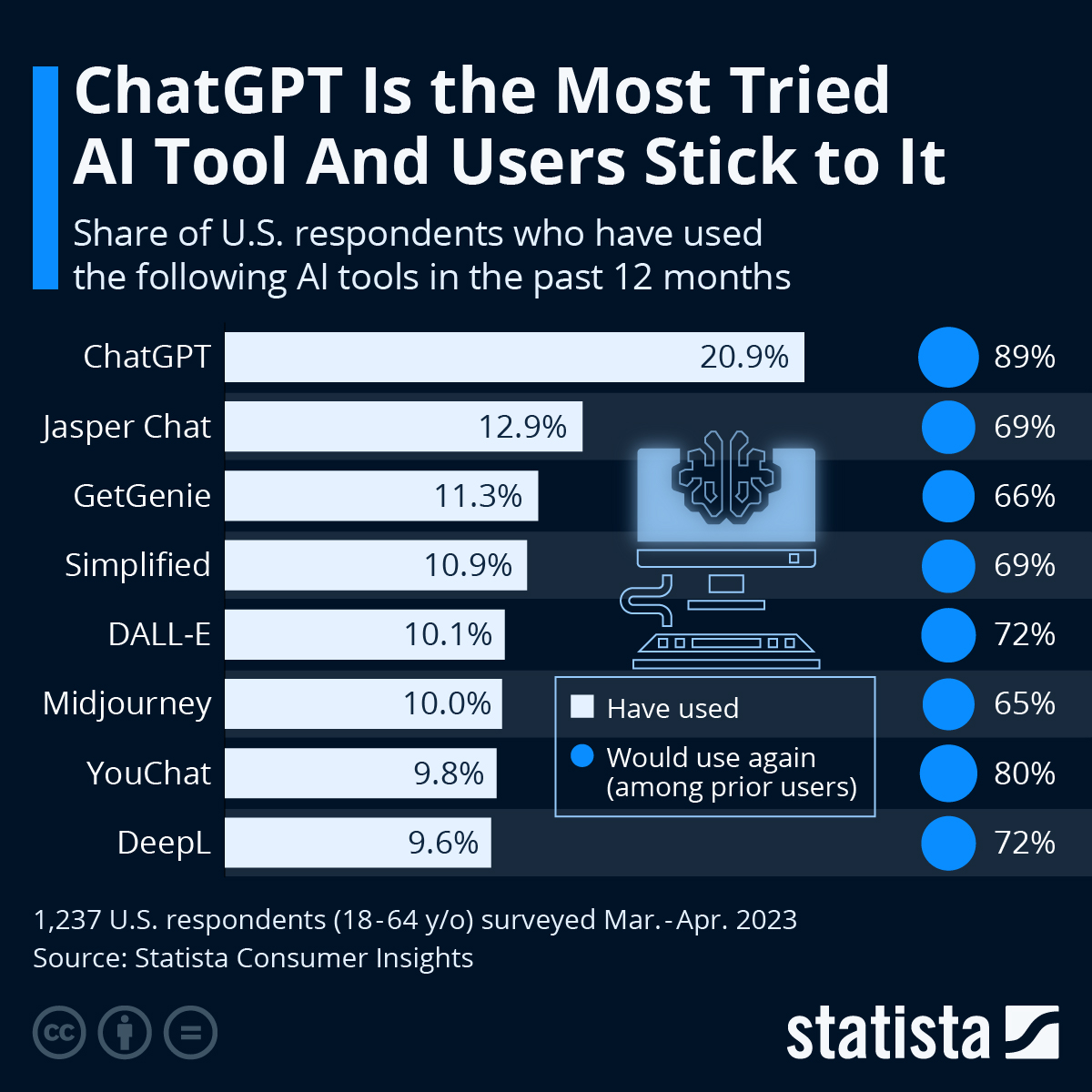

The advent of large language models like OpenAI’s ChatGPT has been a significant development in artificial intelligence. These models are trained on vast amounts of text data, enabling them to generate human-like responses to a wide range of inputs.

In this case, a language model allowed the researchers to look at the semantic meaning of speech, that is, to understand the neuronal activity patterns corresponding to a string of words.

Following the breakthrough, the research group aims to extend the technique to other, more portable brain-imaging systems, such as functional near-infrared spectroscopy (fNIRS).

However, the latest innovation may also raise security fears.