Amazon will invest $1.25 billion in artificial intelligence (AI) safety-focused startup Anthropic as it seeks to boost its presence in the battle for AI supremacy. The deal could expand to $4 billion if certain conditions are met, according to people familiar with the matter.

The move will give engineers at the world’s largest cloud computing provider access to large language models similar to those behind ChatGPT. Amazon also recently gave customers access to a range of AI models through its Bedrock platform.

Amazon to Focus on Corporate Use Cases

If it reaches $4 billion, the deal would be Amazon’s largest investment tied directly to its cloud infrastructure business, Amazon Web Services (AWS). By comparison, Microsoft invested $10 billion in OpenAI, the developer of ChatGPT, in return for a 49% stake.

Earlier this year, AWS CEO Adam Selipsky said the company wanted customers to choose the models they wanted to use.

“We firmly believe there will not be one model to rule them all.”

Anthropic also secured $300 million from Google, itself a competitor in AI, and additional funding from customer relationship management software company Salesforce. Anthropic’s Claude 2 AI assistant competes with advanced AI products like ChatGPT and Google’s Bard.

Anthropic Links to Sam Bankman-Fried

Anthropic’s founders, siblings Dario and Daniela Amodei, left OpenAI in 2021 over concerns the company had become too commercial. After studying the potential of models used at OpenAI, the pair started the company to focus on advancing AI safety.

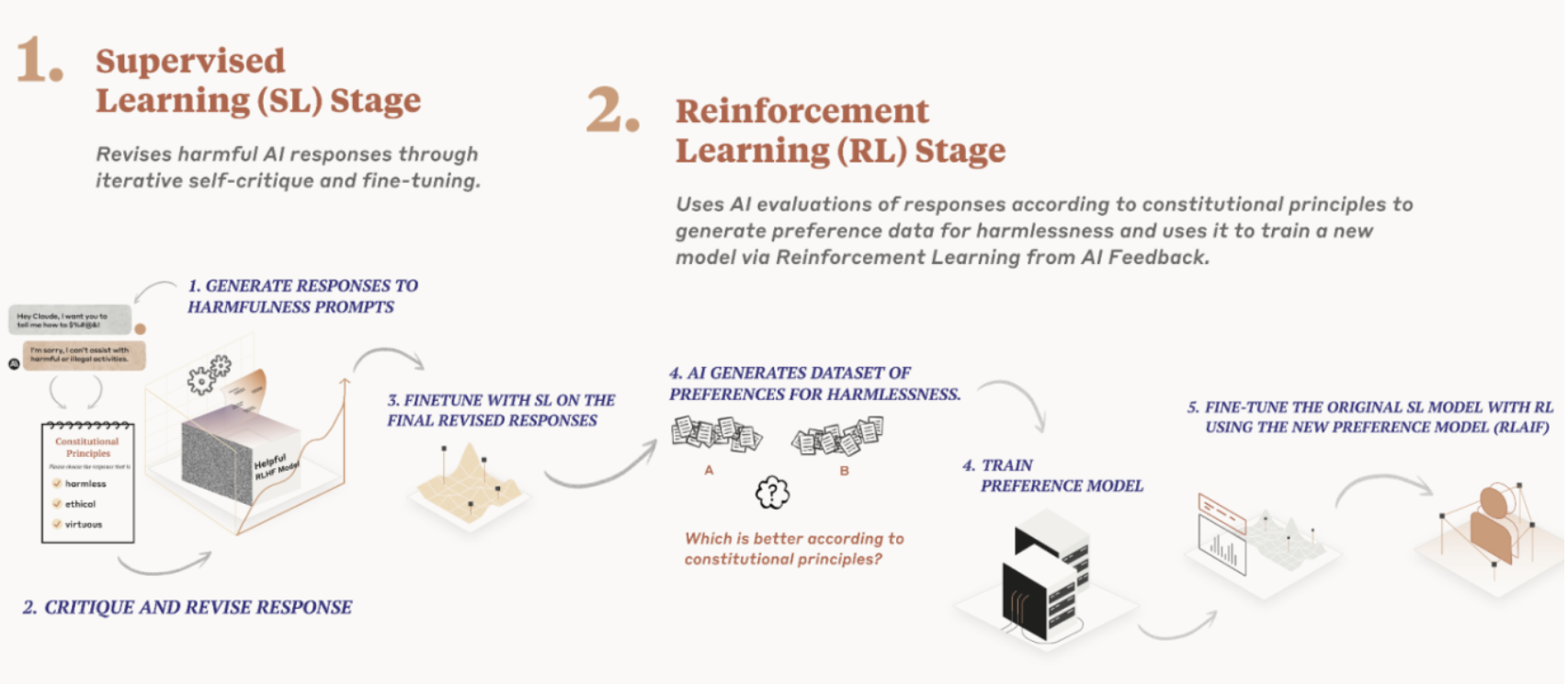

Claude processes requests according to a set of principles under an approach known as Constitutional AI, while a second model evaluates how well the first model follows the rules. The result, the company argues, is an AI assistant that is less prone to abuse.
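The two-model arrangement described above can be pictured with a toy sketch. This is only an illustration under stated assumptions, not Anthropic’s actual method: the principles, the keyword checks, and the names `first_model`, `second_model`, and `answer` are all hypothetical stand-ins for what would in practice be large language models.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Principle:
    """A hypothetical rule; not taken from Anthropic's real constitution."""
    name: str
    violates: Callable[[str], bool]  # True if the text breaks the rule


# Illustrative principles only, implemented as crude keyword checks.
PRINCIPLES: List[Principle] = [
    Principle("no_insults", lambda text: "idiot" in text.lower()),
    Principle("no_credentials", lambda text: "password" in text.lower()),
]


def first_model(prompt: str) -> str:
    """Toy stand-in for the assistant: returns a canned draft."""
    return f"Draft answer to: {prompt}"


def second_model(response: str) -> List[str]:
    """Toy stand-in for the evaluator: lists every principle the response breaks."""
    return [p.name for p in PRINCIPLES if p.violates(response)]


def answer(prompt: str) -> str:
    """Return the draft only if the evaluator finds no broken principles."""
    draft = first_model(prompt)
    broken = second_model(draft)
    if broken:
        return "Request declined (" + ", ".join(broken) + ")"
    return draft
```

In this sketch, `answer("What is AWS?")` passes the draft through unchanged, while a prompt that trips one of the hypothetical rules is declined with the name of the rule it broke.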

The company’s emphasis on safety stems from its early focus on effective altruism, a data-driven approach to charitable causes. The movement’s most famous proponent was arguably former FTX CEO Sam Bankman-Fried, whose crypto empire invested $500 million in Anthropic.

Anthropic reportedly has ties to FTX’s bankruptcy estate and has since distanced itself from the effective altruism movement. Critics of Anthropic argue the company should stop building more powerful AI models that could cause the very AI disaster it is trying to prevent.
